Can LLMs reason, part 2 – a bombshell from Don Knuth

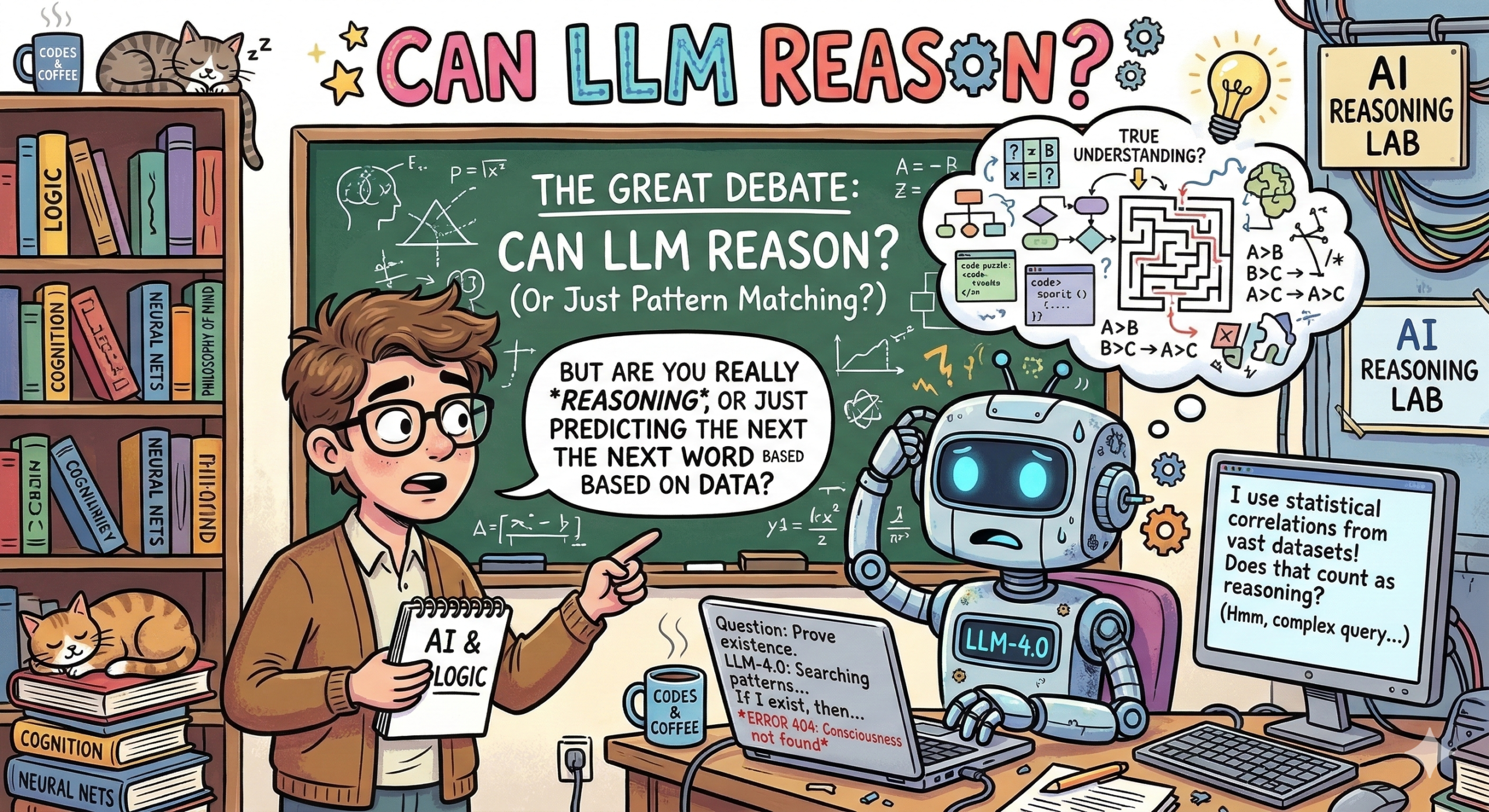

I recently wrote a post describing the dilemma faced by large language models: are they simply probabilistic machines predicting the next token, or do they possess a capacity to genuinely connect ideas through some form of internal mathematical or symbolic reasoning? This often boils down to methods like “saying it out loud,” similar to the popular “Chain of Thought” prompting, which forces the model to externalize its steps and, hopefully, engage in a more methodical process akin to reasoning.

Recently, however, a bombshell was dropped by none other than computer science legend Don Knuth. Knuth, renowned for his foundational work The Art of Computer Programming and as the creator of the TeX typesetting system, revealed that Anthropic’s flagship model, Claude 3 Opus, assisted him in solving the “Claude’s Cycle” problem. This wasn’t a simple task of code completion or data retrieval; it involved complex mathematical and algorithmic reasoning, an area where LLMs are often thought to be weakest. When a figure of Knuth’s stature, who embodies rigorous, precise algorithmic thinking, validates an LLM’s capacity for complex problem-solving, it adds serious weight to the argument that these models are doing more than just sophisticated pattern matching.

I have been equally shocked myself. I have been pretty sick lately and stayed home for some time. When I am bedridden, I love to read a few books or try out a couple of ideas. This time I went back to vibe coding, playing with AI as a personal assistant. While some concerns persist, such as hallucination, the quality has improved dramatically. I’ve been asking AI to write me a couple of scripts. To my surprise, the translation from high-level instructions to algorithms has become much stronger, and code quality has skyrocketed compared to several months or a year ago. It truly saves me time. I have been toying with Gemini a little bit; it’s not perfect and cannot fulfill all my tasks, but still, it’s capable of finding “some” needles in my haystack.

In the end, I keep coming back to the question: can LLMs reason? Perhaps our learning patterns differ. I am a logical and visual learner. I think in pictures, logic, and math. However, I cannot rule out that there are other kinds of learners who rely heavily on “language,” such as most of my family members. All my family members (except me) tend to learn through listening and “words.” Perhaps LLMs are showing how language and words can scale up and arrive at intelligence.

We are lucky to live in an age of change and to get to explore the uncertain.

PS: You are likely reading this “new” post because I am still sick and spending my time in bed with my MacBook Air M4.